AI Style Transfer: Your Guide to Creating Digital Art

Picture this: an artist who can look at Van Gogh's 'Starry Night' and then, almost magically, repaint your family photo with its iconic, swirling brushstrokes and deep blue hues. That, in a nutshell, is AI style transfer. It’s a fascinating process that takes the subject of one image and marries it with the artistic style of another.

So, What Is AI Style Transfer Really Doing?

At its heart, AI style transfer isn't just a fancy Instagram filter that slaps a color overlay on your picture. It's a much deeper, more intelligent process where an algorithm deconstructs two different images to create something entirely new. Think of it as a creative collaboration.

This process needs two key ingredients to work.

First, you have the content image. This is your "what"—the foundational subject. It could be a portrait, a product shot, a landscape, or even a video clip. The AI looks at this image to understand its core structure, the shapes, the objects, and the overall layout.

Second, you have the style image. This is the "how"—the artistic blueprint. It provides the aesthetic DNA for the final output, dictating the color palette, textures, brushstroke patterns, and general vibe. The AI isn't concerned with what is in the style image, but rather how it looks and feels.

The real magic happens when the AI separates the "what" from the "how." It holds onto the recognizable forms from your content image but completely reimagines the surface, painting it with the artistic soul of the style image.

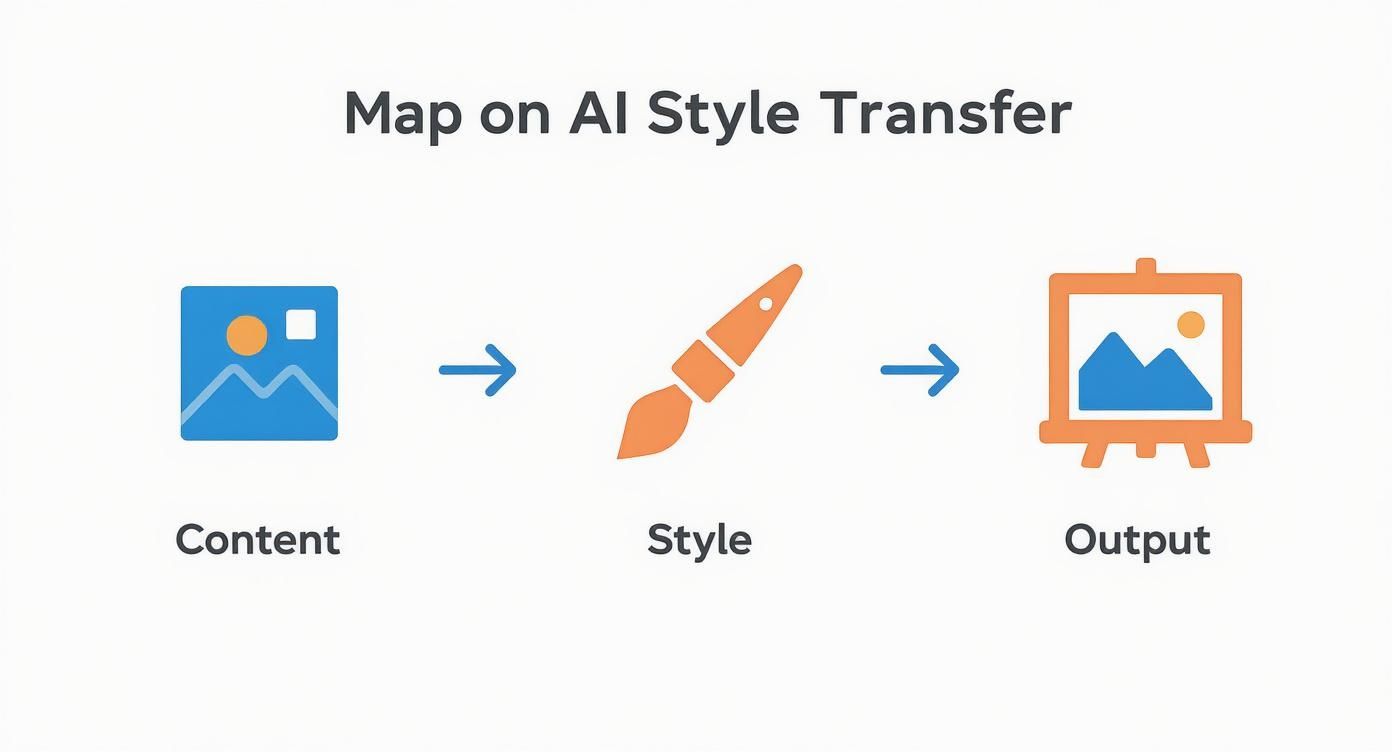

To make this crystal clear, let's break down these core components.

Core Concepts of AI Style Transfer at a Glance

This table offers a quick summary of the essential parts of the AI style transfer process and what each one does.

| Component | Role in the Process |
|---|---|
| Content Image | The source of the subject and composition. It defines what is being depicted. |
| Style Image | The source of the artistic aesthetic. It defines how the final image will look and feel. |
| AI Algorithm | The "digital artist" that separates content from style and then merges them into a new image. |
| Final Output | The synthesized artwork that combines the content of one image with the style of another. |

Understanding these distinct roles is the first step to mastering the technology and getting the results you want.

A Genuine Breakthrough for Creatives

This ability to pull apart and recombine visual elements was a massive step forward for creative AI. It might sound like something out of a sci-fi movie, but the path to practical tools really began with a 2015 research paper, "A Neural Algorithm of Artistic Style" by Leon Gatys, Alexander Ecker, and Matthias Bethge. That paper sparked a huge wave of excitement and marked the moment AI style transfer started moving out of academic labs and into the hands of artists and creators.

The algorithm at the center of it all is solving a complex puzzle. It's trying to figure out how to alter the colors and textures of an image to match the style reference as closely as possible, all while making sure the original subject doesn't get distorted into an unrecognizable mess.

To get a better feel for the technical side of how AI learns and applies these visual traits, diving into a practical guide to Stable Diffusion's image-to-image capabilities is a great next step. Having that foundational knowledge is super helpful before we jump into the specific technologies that make all this possible.

The Technology Powering Creative AI

To really get what makes AI style transfer tick, you have to peek under the hood at the different "engines" driving the process. Each one has its own unique way of seeing and blending art, giving creators a whole toolbox of options. It's not about finding the "best" one, but about picking the right tool for the job.

The whole thing kicked off with Neural Style Transfer (NST), the granddaddy of the technique. Think of it as a meticulous art historian. NST uses a Convolutional Neural Network (CNN), a type of AI trained to recognize patterns in images and loosely inspired by how our own visual system works. The original 2015 method didn't train a new network at all; it borrowed one already trained for image recognition (VGG-19).

First, the AI scans the content image to get a blueprint of its structure: the basic shapes, objects, and lines. At the same time, it studies the style image to learn its artistic DNA: the textures, color palette, and brushstroke patterns. Then comes the hard part. Starting from random noise or a copy of the content photo, it repeatedly adjusts the output image's pixels (an optimization process called gradient descent) so that its textures move closer to the style while its structure still matches the content. This takes many iterations, which is why classic NST is slow.
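The "blueprint" and "artistic DNA" above correspond to two measurements in the original method: a content loss that compares feature maps position by position, and a style loss that compares Gram matrices (correlations between feature channels). The minimal sketch below uses tiny hand-written feature maps as a stand-in for real CNN activations; in a real implementation these come from layers of a pretrained network, and gradient descent nudges the output's pixels to shrink both losses.

```python
# Toy sketch of the two measurements behind classic Neural Style Transfer.
# Assumption: each "feature map" is a list of channels, and each channel is a
# flattened list of activations, as if read from one layer of a pretrained CNN.

def gram_matrix(features):
    """Correlations between channels: G[i][j] = sum over positions of F[i] * F[j]."""
    return [[sum(a * b for a, b in zip(fi, fj)) for fj in features] for fi in features]

def content_loss(generated, content):
    """Compares activations position by position, so it cares WHERE things are."""
    return 0.5 * sum(
        (g - c) ** 2
        for g_ch, c_ch in zip(generated, content)
        for g, c in zip(g_ch, c_ch)
    )

def style_loss(generated, style):
    """Compares Gram matrices, which sum over positions, so it only cares
    WHICH textures and colors co-occur, not where they sit."""
    n_channels, n_positions = len(generated), len(generated[0])
    g_gen, g_style = gram_matrix(generated), gram_matrix(style)
    squared_diff = sum(
        (a - b) ** 2 for row_a, row_b in zip(g_gen, g_style) for a, b in zip(row_a, row_b)
    )
    return squared_diff / (4 * n_channels**2 * n_positions**2)

# Two channels, four spatial positions.
style = [[1.0, 0.0, 2.0, 1.0], [0.0, 1.0, 1.0, 3.0]]
# The same activations with their positions reversed: same "texture", new layout.
rearranged = [list(reversed(channel)) for channel in style]

print(style_loss(rearranged, style))    # 0.0
print(content_loss(rearranged, style))  # 13.0
```

Rearranging the activations leaves the style loss at zero but makes the content loss jump. That is exactly the "what" versus "how" split: the content loss pins down layout, while the style loss only tracks texture statistics.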

Generative Adversarial Networks (GANs)

Then came a more competitive approach: Generative Adversarial Networks (GANs). Picture a high-stakes duel between two AIs. One, the "Generator," is like an art forger, desperately trying to create a new image that perfectly marries the content and the style. The other, the "Discriminator," plays the role of a seasoned art critic, trying to tell if the image is a forgery or the real deal.

This constant back-and-forth pushes the Generator to create increasingly realistic and cohesive images. Every time the Discriminator spots a fake, the Generator adjusts and tries again, only better. Repeated over a long training run, this duel produces images that are not just styled, but often highly detailed and coherent.
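The forger-versus-critic duel boils down to two competing scores. Here is a minimal sketch of the standard binary cross-entropy GAN losses, assuming the Discriminator outputs a probability that an image is real; the scores are plain hypothetical numbers rather than outputs of a trained network.

```python
import math

def bce(prob, target):
    """Binary cross-entropy for one prediction; prob is the critic's 'this is real' score."""
    eps = 1e-12  # keep log() away from zero
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))

def discriminator_loss(score_on_real, score_on_fake):
    """The critic is rewarded for calling real art real (1) and forgeries fake (0)."""
    return bce(score_on_real, 1.0) + bce(score_on_fake, 0.0)

def generator_loss(score_on_fake):
    """The forger is rewarded when the critic mistakes its output for real art
    (the widely used "non-saturating" generator loss)."""
    return bce(score_on_fake, 1.0)

# Hypothetical critic scores for a forged image, early and later in training.
print(round(generator_loss(0.1), 3))  # 2.303: the critic easily spots the fake
print(round(generator_loss(0.6), 3))  # 0.511: the forgery now fools the critic more often
```

Each side is trained to lower its own loss, which raises the other's. Style-transfer GANs such as CycleGAN add further terms (for example, a cycle-consistency loss) so the original content survives the restyling.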

Modern Diffusion Models

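The newest and arguably most powerful engine in the garage is the Diffusion Model. This method has an almost sculptural quality to it. It starts by taking your original image and adding random visual "noise" (like TV static) to it. During training, the model sees images noised until nothing recognizable is left. For style transfer, tools usually add only part of that noise, so the broad shapes of your content survive. A "strength" or "denoising strength" setting typically controls how much.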

Then, the real artistry begins. Guided by the style image and any text prompts you've provided, the AI reverses course. It strips away the noise step by step, "denoising" its way back to a clean image. In this reconstruction phase, it essentially carves out a brand-new picture that fuses the content's structure with the artistic style you wanted. The results can be exceptionally high-quality, and the strength setting and prompts give you a fine degree of control.
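To make the noising step concrete, here is a minimal sketch of the closed-form forward process used by DDPM-style diffusion models, x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε. The schedule (1,000 steps with betas rising linearly from 1e-4 to 0.02, as in the original DDPM paper) and the mapping from a "strength" value to a starting step are assumptions; real tools vary. The denoising half needs a trained network, so it is not shown.

```python
import math
import random

T = 1000  # number of diffusion steps (assumed; a common default)
# Linear noise schedule: beta grows from 1e-4 to 0.02 across the T steps.
BETAS = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]

def alpha_bar(t):
    """Fraction of the original signal's variance left after t noising steps."""
    prod = 1.0
    for beta in BETAS[:t]:
        prod *= 1.0 - beta
    return prod

def noise_image(pixels, strength, rng):
    """Jump straight to step t = strength * T with the closed-form forward process."""
    a = alpha_bar(int(strength * T))
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in pixels]

def signal_fraction(strength):
    """How much of the original image's amplitude survives at this strength."""
    return math.sqrt(alpha_bar(int(strength * T)))

rng = random.Random(0)
photo_row = [0.2, 0.4, 0.6, 0.8]  # one row of a toy grayscale "photo"
lightly_noised = noise_image(photo_row, strength=0.3, rng=rng)
print(signal_fraction(0.3), signal_fraction(1.0))
```

At a low strength most of the photo's signal survives, which is why its layout carries through into the stylized result. At strength 1.0 almost nothing is left, and the model effectively paints from scratch.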

This diagram helps visualize how a content photo and a style reference are combined to create a final piece of artwork.

The process shows that the final image isn't just a simple filter slapped on top; it's a true synthesis of two separate visual inputs. If you want to explore the wider world of AI tools for creating visual content, it's worth checking out some of the best AI image generator tools available today. Each of these technologies—NST, GANs, and Diffusion models—gives us a different path to unlocking our creativity and shaping the future of digital art.

How Style Transfer Works for Video

Taking an artistic style and applying it to a video is a whole different ballgame compared to styling a single photo. You can't just treat the video like a big stack of individual images and process them one by one. The real magic—and the biggest headache—is making sure the style flows seamlessly from one moment to the next. This is what we call temporal consistency.

Picture this: you're watching a video where the style seems to have a life of its own, but not in a good way. The texture on a character’s jacket boils and shifts, colors flicker, and brushstrokes dance around randomly. That distracting, jittery effect is a classic sign of style transfer gone wrong, and it happens when the AI treats each frame as a completely separate art project.

The model doesn't automatically know that frame 101 is just a fraction of a second after frame 100. Without that context, it just paints each one from scratch, creating a visual mess that pulls the viewer right out of the experience.
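Flicker is easy to measure. This minimal sketch uses a hypothetical stand-in for a style model (random texture seeded per run) on a shot where nothing moves. It compares styling every frame independently against reusing one consistent texture, scoring each with the mean absolute change between consecutive frames.

```python
import random

def stylize_frame(frame, seed):
    """Hypothetical stand-in for a style model: adds a brushstroke-like texture.
    A different seed paints the same content differently, just as an
    independent per-frame run can."""
    rng = random.Random(seed)
    return [pixel + rng.uniform(-0.2, 0.2) for pixel in frame]

def flicker(frames):
    """Mean absolute change per pixel between consecutive frames (0 = rock steady)."""
    total, count = 0.0, 0
    for prev, cur in zip(frames, frames[1:]):
        total += sum(abs(a - b) for a, b in zip(prev, cur))
        count += len(cur)
    return total / count if count else 0.0

# A static shot: the same four-pixel frame repeated ten times.
static_shot = [[0.5, 0.5, 0.5, 0.5]] * 10

independent = [stylize_frame(f, seed=i) for i, f in enumerate(static_shot)]
consistent = [stylize_frame(f, seed=0) for f in static_shot]

print(flicker(independent))  # clearly above 0: the texture "boils"
print(flicker(consistent))   # 0.0: the texture stays put
```

Freezing the texture only works here because nothing moves. Once the camera or subject moves, the texture has to travel with the motion instead of staying fixed on screen.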

The Importance of Temporal Consistency

The fix is to get the AI to think about the video as a whole. Modern video style transfer tools are designed to maintain a stable, coherent look over time. When a car drives across the screen, the painted texture on it should stick to the car, not shimmer in place while the car moves underneath.

A great video style transfer isn't about painting a single frame. It's about choreographing a style across an entire sequence, making sure it respects the natural flow of motion.

To pull this off, the technology has to be much smarter. Some models use optical flow: motion vectors that record how each pixel moves from one frame to the next. By following that movement, the AI can carry the style along with the objects it belongs to, so it feels organic and locked into the scene.
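One published way to use motion vectors is a temporal loss: warp the previous stylized frame along the motion and penalize the new frame wherever it disagrees. The sketch below is a heavily simplified 1D version of that idea, assuming integer, already-known motion vectors. Real systems estimate 2D, sub-pixel optical flow and also mask out occluded regions.

```python
def warp(prev_frame, flow):
    """Carry last frame's stylized pixels along the motion vectors.
    flow[i] is how far the content now at pixel i has moved since the previous
    frame, so it came from position i - flow[i]. Pixels with no source
    (e.g. newly revealed at the edge) become None."""
    warped = []
    for i, dx in enumerate(flow):
        src = i - dx
        warped.append(prev_frame[src] if 0 <= src < len(prev_frame) else None)
    return warped

def temporal_loss(cur_stylized, prev_stylized, flow):
    """Mean squared disagreement between this frame and the motion-compensated last frame."""
    warped = warp(prev_stylized, flow)
    pairs = [(c, w) for c, w in zip(cur_stylized, warped) if w is not None]
    if not pairs:
        return 0.0
    return sum((c - w) ** 2 for c, w in pairs) / len(pairs)

# The camera pans, so the whole stylized scene shifts one pixel to the right.
prev_stylized = [0.0, 0.9, 0.1, 0.0, 0.0]
flow = [1, 1, 1, 1, 1]
texture_follows_motion = [0.0, 0.0, 0.9, 0.1, 0.0]
texture_stuck_in_place = [0.0, 0.9, 0.1, 0.0, 0.0]

print(temporal_loss(texture_follows_motion, prev_stylized, flow))  # 0.0
print(temporal_loss(texture_stuck_in_place, prev_stylized, flow))  # > 0: penalized
```

A texture that rides along with the motion costs nothing. A texture that stays fixed on screen while the scene slides underneath it is penalized, which is exactly the "shimmer in place" artifact described earlier.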

Comparing Image and Video Demands

As you can imagine, the technical horsepower needed for video is on another level. You’re not just dealing with more data; you’re wrestling with the added dimension of time, which requires much more sophisticated algorithms.

Here’s a look at how the challenges stack up.

Image vs Video Style Transfer Comparison

When you move from a static picture to a moving one, the rules of the game change entirely. The following table breaks down the core differences in what's required for each.

| Aspect | Image Style Transfer | Video Style Transfer |
|---|---|---|
| Primary Goal | Apply a style to a single, static image. | Maintain stylistic consistency across thousands of moving frames. |
| Key Challenge | Balancing the original image's structure with the new aesthetic. | Preventing flicker, shimmering, and other visual artifacts over time. |
| Technical Need | High-quality single-frame processing. | Motion tracking, optical flow analysis, and temporal smoothing. |
| Common Issues | Loss of important details or unnatural-looking textures. | Distracting visual noise, inconsistent colors, and unstable patterns. |

This comparison really shows why specialized tools are so important for video. Platforms like Auralume AI are built specifically to tackle these video-centric problems. Features designed for motion and temporal coherence aren't just bells and whistles—they're fundamental to creating professional-looking videos that draw people in instead of pushing them away.

Putting AI Style Transfer to Work

It’s one thing to understand the tech, but it’s another thing entirely to see AI style transfer out in the wild. This isn't just a cool party trick for programmers; it's a genuinely powerful tool that artists, marketers, and developers are using to save time, grab attention, and create visuals that were once impossible.

We've moved well past the novelty phase. Style transfer is now solving real creative and business problems, and it’s about so much more than just making a photo look like a famous painting.

Whether it’s generating a unique art collection or launching a marketing campaign that people actually remember, the applications are as diverse as the styles you can imagine.

For Artists and Content Creators

Digital artists have really embraced style transfer to create entire series of works that all share a consistent, unique look. Think about it: instead of painstakingly recreating a specific aesthetic across dozens of pieces, they can apply their signature style to new content almost instantly. It completely changes their workflow.

This means they can experiment and build out large, cohesive collections for their portfolios or for sale on digital marketplaces much faster. The AI acts like a tireless studio assistant, perfectly mimicking the desired style so the artist can stay focused on the bigger picture—the composition and the idea.

By automating the stylistic part of the work, creators can prototype visual ideas at a speed we've never seen before. They can explore ten different directions without getting bogged down in the manual effort of rendering each one.

For content creators, this is all about building a killer brand identity. Imagine a YouTuber who can stamp all their video thumbnails with a consistent, stylized look. Suddenly, their content becomes instantly recognizable in a crowded subscription feed. That kind of consistent branding is gold for building an audience that sticks around.

For Marketing and Advertising

In marketing, the first battle is always for attention. Let's be honest, most corporate videos and standard product photos are just visual noise. AI style transfer gives marketers a way to cut through that noise by turning generic content into something that makes people stop scrolling.

A mundane product demo can be completely reborn with an animated or painterly style, transforming it from a dry tutorial into a captivating story. This works especially well on social media, where visually distinctive content tends to stand out and attract engagement. A campaign for a new coffee brand, for example, could use a warm, impressionistic style to make its ads feel cozy and artisanal.

Here are a few other places it's making a huge impact:

- Game Development: Indie game developers can generate stylized textures and assets, building a unique visual world without needing a massive art department. A single artist can prototype the entire look and feel of a game.

- Filmmaking: Directors and VFX artists use it to prototype different looks for scenes without blowing the budget. Before they sink money into expensive post-production, they can test whether a scene works better with a gritty graphic novel aesthetic or a classic film noir vibe.

These real-world examples show just how flexible this technology is for anyone working on a visual project. Platforms like Auralume AI are designed to make these capabilities accessible, putting the focus back on the creative vision, not the technical headaches.

Your Workflow for Professional Results

Getting stunning results from AI style transfer isn't about luck—it's about having a solid, repeatable workflow. Once you understand the theory, it's time to put it into practice. That means knowing how to prep your assets and which knobs to turn to get the look you're after. This playbook will walk you through the essential steps to create truly polished work.

Everything starts with your source material. The inputs you choose are the foundation for the entire piece, and if you start with weak ingredients, you’ll almost always get a disappointing result.

For your content image or video, look for strong compositions and subjects that are easy to identify. A clear focal point gives the AI a solid structure to work with. When picking your style reference, find an image with a bold, consistent aesthetic. A muddy or visually inconsistent style gives the algorithm no clear pattern to learn, and the result will look just as muddled.

Fine-Tuning Your Artistic Vision

With your content and style images ready, the real creative work begins in the control panel. This is where you'll mix and match the two inputs to find that perfect blend. The two most important settings you'll see are typically labeled "style weight" and "content weight."

It helps to think of them like faders on a mixing board:

- Style Weight: This dial controls how intensely the style is applied. Crank it up, and your output will look much more like the style reference, but you might lose some detail from your original content.

- Content Weight: This controls how much of the original structure is preserved. A higher value keeps your subject recognizable but can water down the artistic effect.
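In the original NST paper, these two faders appear as weights in a single objective that the optimizer minimizes. In the paper's notation, α is the content weight and β is the style weight. Here F is the output image's feature map at a chosen layer, P is the content image's feature map, G^ℓ and A^ℓ are the Gram matrices of the output and the style image at layer ℓ (with G^ℓ_ij = Σ_k F^ℓ_ik F^ℓ_jk), N_ℓ and M_ℓ are the number of channels and spatial positions, and w_ℓ weights each layer:

$$
\mathcal{L}_{\text{total}} = \alpha\,\mathcal{L}_{\text{content}} + \beta\,\mathcal{L}_{\text{style}},
\qquad
\mathcal{L}_{\text{content}} = \frac{1}{2}\sum_{i,k}\left(F_{ik} - P_{ik}\right)^2,
\qquad
\mathcal{L}_{\text{style}} = \sum_{\ell} w_\ell\,\frac{1}{4N_\ell^2 M_\ell^2}\sum_{i,j}\left(G^{\ell}_{ij} - A^{\ell}_{ij}\right)^2
$$

Multiplying both α and β by the same number doesn't change which image minimizes the total. That's why these settings are usually quoted as a ratio.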

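Finding the right balance is all about experimentation. Early research on neural style transfer parameters reported that good results often came from specific ratios, such as a style-to-content weight of 50:100 or 200:100. Treat numbers like these as starting points rather than rules: the raw size of each loss depends on how a given tool computes it, so a ratio that works in one implementation can behave very differently in another. If you're curious about the technical side, you can explore the history of artificial intelligence on aiartkingdom.com to see how these settings were developed.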
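To see why the ratio matters, here is a toy sketch that scores two hypothetical candidate outputs with the weighted objective from classic NST, content_weight × content loss + style_weight × style loss. The loss values are invented for illustration. The content weight is fixed at 100 while the style weight varies, matching the 50:100 and 200:100 ratios mentioned above.

```python
def total_loss(content_loss, style_loss, content_weight, style_weight):
    """Weighted objective from classic NST: alpha * L_content + beta * L_style."""
    return content_weight * content_loss + style_weight * style_loss

# Two hypothetical candidate outputs: (content loss, style loss), values invented.
CANDIDATES = {
    "faithful": (1.0, 3.0),   # subject intact, style faint
    "painterly": (2.5, 1.0),  # heavy style, some detail lost
}

def preferred(style_weight, content_weight=100):
    """Name of the candidate with the lowest total loss at this style:content ratio."""
    return min(
        CANDIDATES,
        key=lambda name: total_loss(*CANDIDATES[name], content_weight, style_weight),
    )

print(preferred(50))   # faithful  (ratio 50:100)
print(preferred(200))  # painterly (ratio 200:100)
```

Nothing about the two images changes. Turning the style fader up simply changes which one the optimizer considers "best", and that is the trade-off you're steering when you adjust these settings.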

The perfect setting is a dance between artistic expression and structural clarity. Your goal is to find the sweet spot where the style enhances the content without overpowering it.

Upscaling and Maintaining Motion

Once you've nailed the artistic blend, the final steps are all about polish and performance. Most style transfer tools generate a low-resolution preview first to keep things fast. To get a crisp, high-definition final product, you’ll need to upscale it. Good upscaling tools intelligently increase the resolution without adding blur or digital artifacts.
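Most tools don't expose how their upscaler works, but the problem an AI upscaler solves is easy to see with the naive baseline. This minimal sketch uses plain linear interpolation on one row of pixels (not an AI method) to double the resolution of a crisp edge:

```python
def upscale_row_linear(row, factor=2):
    """Naive linear interpolation of one row of pixels: the non-AI baseline."""
    if not row:
        return []
    out = []
    for i in range(len(row) - 1):
        for step in range(factor):
            t = step / factor  # how far we are between pixel i and pixel i + 1
            out.append(row[i] * (1 - t) + row[i + 1] * t)
    out.append(row[-1])
    return out

edge = [0.0, 0.0, 1.0, 1.0]  # a crisp black-to-white edge
print(upscale_row_linear(edge))  # [0.0, 0.0, 0.0, 0.5, 1.0, 1.0, 1.0]
```

The new mid-gray pixel (0.5) softens the edge, and across a whole image that adds up to visible blur. AI upscalers instead predict plausible detail for the new pixels, which is how they keep edges and brush textures sharp.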

For video, the most critical final step is handling motion. Always make sure your platform's temporal consistency settings are switched on. This is what keeps the style "stuck" to the objects in your scene as they move, creating a smooth, natural look. Without it, you get that telltale flickering and jitter that screams "amateur." Mastering these final touches is what separates a quick experiment from a captivating, professional-grade visual.

Common Questions About AI Style Transfer

Diving into AI style transfer for the first time usually brings up a few key questions. It's a powerful technology, but getting a handle on the details is what separates a good result from a great one. Let's walk through some of the most common things creators ask when they're just starting out.

Think of this as your quick-start guide to moving from "what if?" to "watch this."

Can I Use My Own Images as a Style Reference?

Absolutely. In fact, this is where the magic really happens. While most tools offer a library of classic styles—think Van Gogh or Monet—the real creative freedom comes from using your own images.

You can upload pretty much anything with a distinct visual vibe:

- A photograph you took that has a killer color palette.

- A digital illustration with a specific brush texture you love.

- Even a simple geometric pattern or a single, striking frame from your favorite film.

The AI doesn't just copy-paste. It breaks down the core elements of your reference image—the colors, the textures, the patterns, the "feel"—and then intelligently weaves them into your original photo or video. For the best results, choose a style image with a strong, clear aesthetic that you think will play well with the subject of your content.

What Is the Difference Between AI Style Transfer and a Filter?

This is a big one, and the difference is night and day. A standard filter, like the ones on Instagram, is basically a simple overlay. It applies a uniform set of adjustments—changing brightness, contrast, or color values—across the entire image, without any real understanding of what it's looking at.

AI style transfer isn't an overlay; it’s a genuine artistic re-imagination. It uses a deep neural network to look at two things separately: the "content" (the shapes and objects in your photo) and the "style" (the aesthetic of your reference image).

It then synthesizes a new image, applying the style's textures and color schemes in a way that respects the underlying structure of your original content. It’s a true fusion, not just a colored lens.
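The "uniform set of adjustments" a filter applies fits in a single line of code. This minimal sketch is a hypothetical brightness-and-contrast filter, not any specific app's algorithm. Every pixel passes through the same formula, so two pixels with the same value always come out identical, whatever they belong to.

```python
def simple_filter(pixels, brightness=0.1, contrast=1.2):
    """A classic filter: one fixed formula applied to every pixel independently,
    with no knowledge of what the pixel belongs to. Values are clamped to [0, 1]."""
    return [min(1.0, max(0.0, (p - 0.5) * contrast + 0.5 + brightness)) for p in pixels]

sky_pixel, face_pixel = 0.6, 0.6  # same brightness, very different subjects
out = simple_filter([sky_pixel, face_pixel])
print(out[0] == out[1])  # True: a filter cannot tell them apart
```

Style transfer, by contrast, works on features computed from whole neighborhoods of pixels, so its output at a point depends on the shapes and textures around it rather than on that single pixel's value.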

Are There Legal Concerns with Using an Artist's Style?

This is where things get a bit gray, and it’s a hot topic in both the legal and creative communities. Copyright generally protects specific works, not an artist's overall "style." However, using AI to create something that looks almost identical to a specific piece of work by a living artist, especially without their permission, is ethically tricky.

It raises real questions about originality, artistic credit, and whether the original artist is being fairly treated. From a legal standpoint, if your final output is considered "substantially similar" to a specific copyrighted artwork, you could be heading into infringement territory.

To stay on the right side of this, here’s a good approach:

- Stick to public domain artists. In many countries, copyright lasts for 70 years after the artist's death (terms vary by jurisdiction), so works by artists who died well before that are generally fair game.

- Create your own style references. Use your own photography, digital art, or even physical textures to build a unique style library.

- Mix and match. Instead of directly cloning one artist, pull inspiration from multiple sources to create something entirely new.

This lets you be incredibly creative while still being respectful of other artists and their hard work.

Ready to turn your creative vision into reality? Start creating stunning, cinematic videos from simple text, images, or ideas with Auralume AI. Explore the future of video creation at https://auralumeai.com.